Eric Brown • 21 min read

Building God: Why Superintelligent A.I. Will Be the Best (or Last) Thing We Ever Do

“The challenge presented by the prospect of superintelligence, and how we might best respond is quite possibly the most important and most daunting challenge humanity has ever faced. And—whether we succeed or fail—it is probably the last challenge we will ever face.”

— Nick Bostrom

Religion is arguably mankind’s greatest creation. For better or for worse, religion has guided progress for thousands of years. Whatever your flavour—Egyptian, Norse, Greek, Judeo-Christian, Islamic, Buddhist—God-like figures exist and inspire in scripture and faith to this day.

Ideas of a creator, intelligent design, self-transcendence, and the afterlife capture our imaginations, hopes, and dreams.

Belief in God hinges on faith. The acceptance that something greater than ourselves exists and has us in mind. That the universe will work for us, rather than against us.

What if God wasn’t separate from us, but part of us?

What if we projected our most virtuous traits into a perfect being for us to strive towards?

What if God is a creation of mankind, a manifestation of our innermost desires and dreams?

What if we are God?

Or, what if we could create God.

Now, there’s something worth talking about …

Artificial Intelligence and the Slippery Slope to Building God

Let’s be clear: creating a God (or Gods) is no small feat.

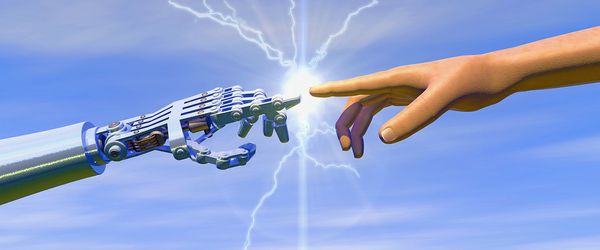

Through Artificial Intelligence (AI), we have an avenue to create God. Not just artificial intelligence, but artificial superintelligence. It is possible that multiple AIs could fill this role, but for our purposes, we’ll stick with one.

An all-knowing, all-powerful, omnipresent being.

Sound familiar?

To begin, we need a working definition of artificial intelligence, and what we mean by superintelligence. The distinction is important, and in the context of humanity’s future, paramount.

Artificial intelligence is no longer a myth, nor is it confined to movie theaters and Star Trek. We have 3 categories of artificial intelligence:

1 — Artificial Narrow Intelligence (ANI)

ANI, Narrow Intelligence, or Weak AI, is a specialized intelligence. It is able to perform a single task and perform it well. This is how Google Maps gets you to your destination. Narrow intelligence is how Siri can tell you if your favorite basketball team won their game.

These creations are intelligent in the sense that they can perform a given task and produce the desired answer, but narrow in that their scope does not reach outside of this parameter. Right now, ANI is everywhere and pervades most of your life, from traffic lights to smartphones to banking institutions.

ANI makes your life a whole lot easier.

2 — Artificial General Intelligence (AGI)

AGI, General Intelligence, or Strong AI, is, as you would guess, a ‘general’ intelligence. An AGI can take lessons learned from previous efforts and apply them to new situations. It can take an ambiguous task, such as ‘solve this problem’, and deduce the way to do it. It can reason, experiment, and understand.

It can learn. Like we do.

We tend to say that humans have a general intelligence; skills and frameworks that can be applied to new problems, and a working memory to pull from past experience to solve similar problems.

AGI is what the scientific and technological community is currently working towards. Google’s AlphaGo is a notable step in these efforts, even though it is itself a narrow system that only plays Go. The goal is a machine that can reason its way to victory, tackle ambiguous tasks or unclear instructions, and improve how it does this in the future.

This is no small feat, but it is the stepping stone between a handy robot assistant like the Jetsons’ and a God capable of bending space-time to its will.

This is the difference between Siri saying, “Sorry, I don’t understand what you mean,” and Siri being able to write your English essay for you.

It’s important.

3 — Artificial Super Intelligence (ASI)

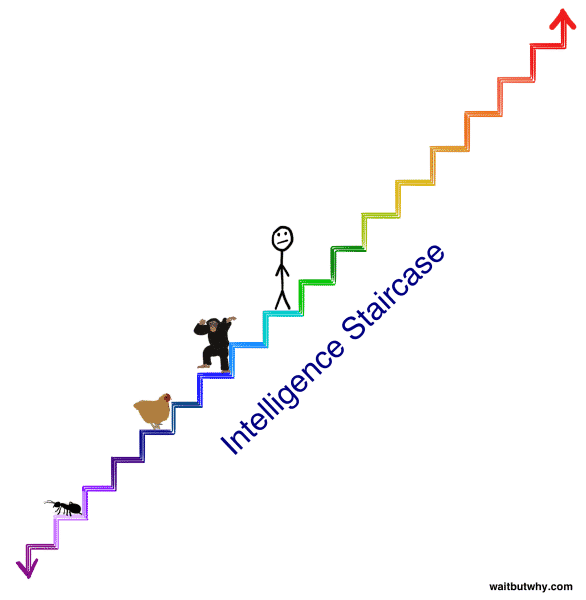

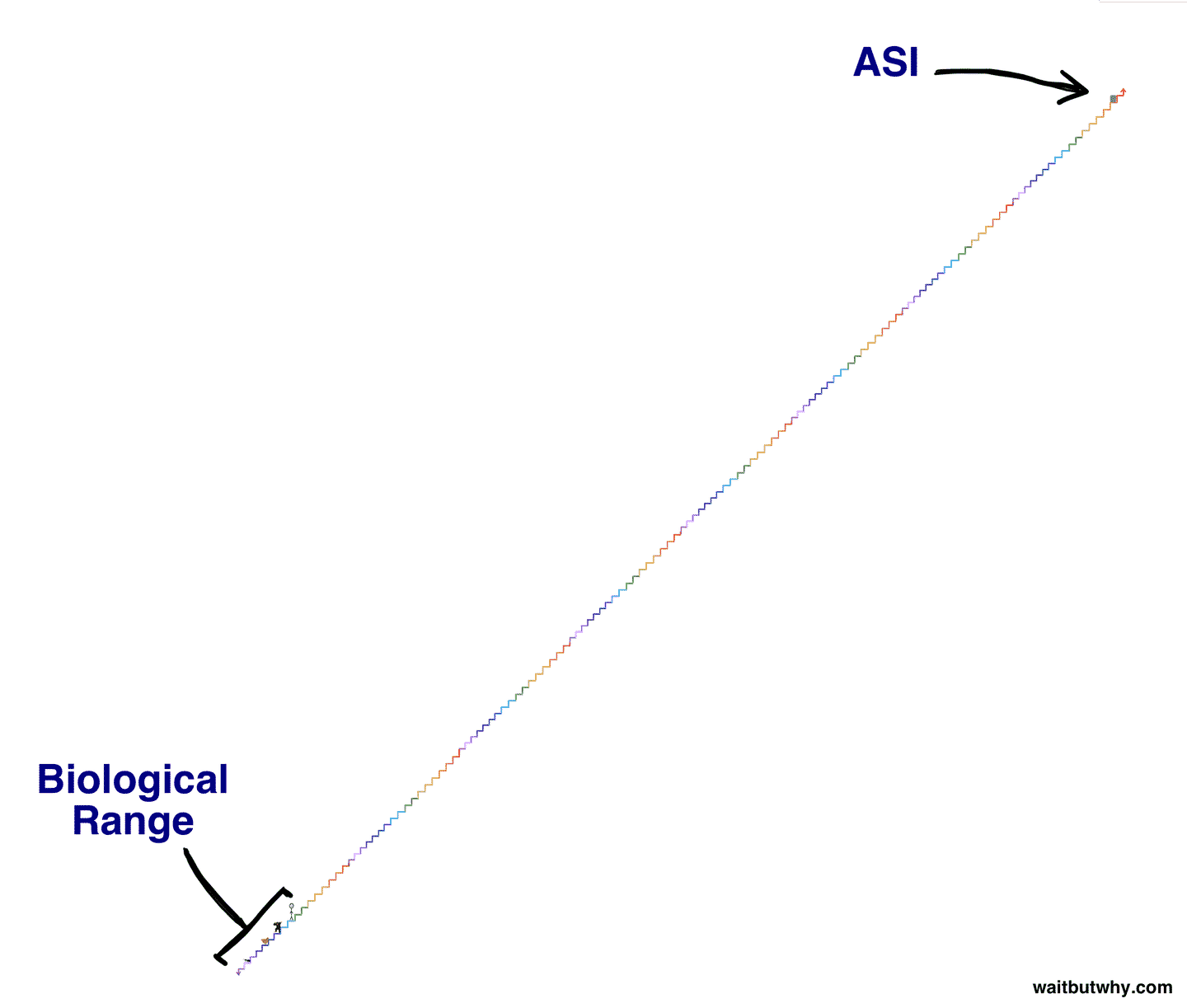

To understand ASI, or Artificial Superintelligence, we need to understand superintelligence.

You may think of Einstein, Aristotle, or other great thinkers — but you would be wrong. These were great thinkers, but with some time and effort, you could reach this level.

Their level of intelligence is incredible, but attainable.

Superintelligence, on the other hand, towers far above anything you can imagine. It is an intelligence smarter than you think, and smarter than you can think. It can do, think, and analyze in ways we cannot comprehend.

Think of it this way: no matter how many times you show your dog a building, some concrete, and some tools, it will never understand how to make a building. A skyscraper is beyond the ways a dog can think.

This is what superintelligence is, a monolith of information that can outthink all of humanity, at anything, instantly.

This is what we are on the road to creating.

A God, omniscient and omnipotent. You might compare this to the thinking gap between us and an ant, but this too would be shortsighted.

The gap is far greater than that.

It is like comparing humans to a speck of dust.

To an ASI, curing cancer is no more difficult than tying shoes. At this level of computational wizardry, every problem we can imagine is trivial. A walk in the park of rationality and thinking power.

The most common theory of God we know and use is the ‘Omni God’ theory, which states that Gods are: omniscient (all-knowing), omnipotent (all-powerful), omni-temporal (exist in all time), omnipresent (exist in all places), and omnibenevolent (all-good). Aside from the all-good side of things (that’s up for debate) — it seems that ASI could, or already does, meet our standard definition of God.

We know Gods as capable of building universes, curing sickness, and performing many other otherworldly feats.

Perhaps building an ASI is a good thing. Maybe it could solve all of our problems, even ushering in a new utopian age?

What exactly could an ASI do?

What Could Artificial Superintelligence Accomplish?

Fundamentally, an ASI can accomplish two things: 1) anything you can think of, and 2) everything you can’t even imagine yet.

At both the macro and micro scale, an ASI would propel humanity to heights once left to the realm of science fiction.

Intimate control of sub-atomic structures and a mastery of intergalactic travel would be possible within years, if not months or days. Diseases, disaster relief/prevention, aging — any and all ailments known to modern man would be superfluous and easily solvable.

For a concrete example, take nanotechnology. Nanotechnology is technology in the nanometer range, far smaller than what is visible to the human eye. For perspective, a single sheet of newspaper is 100,000 nanometers thick.

Technology at this scale can influence the movement of atoms. It could flood and control the body of a living host, or build structures seemingly out of thin air by rearranging the carbon atoms in the atmosphere.

An ASI, if programmed to do so, could create nanotechnology.

From there, we could use it however we see fit:

Preventing aging, thereby introducing immortality.

Instant creation of goods, removing the need to grow food or manufacture material objects. (Think of a supercharged version of 3D printing.)

An ASI could even create and master energy production by creating an endless supply of clean energy to power whatever incredible modes of transportation it can design.

Perhaps it could overcome the pesky restraint of time itself, accomplishing all of the aforementioned tasks instantaneously, or move so close to the speed of light that time bends and seconds become decades.

Not bad if you ask me.

Taking this further, if an ASI is able to rewrite its own software (self-recursive programming, more commonly known as recursive self-improvement), it would take only hours for it to move from the intelligence of dust, to an ant, to a human, to all of humanity, to God.

The question, it seems, should not be what could an ASI accomplish — but what could it not?

This is a difficult question to answer.

Of course, these are just the things we can come up with right now, using our feeble primal monkey minds. It’s likely that an ASI can create things we can’t yet imagine.

Immortality. The end of struggle. These sound pretty great; I’m all for them.

But these are all under the assumption that an ASI wants to work in our favor. What could the future look like if humanity can harness the power of a technological God?

I’ll give you a hint: paradise.

“Machine intelligence is the last invention that humanity will ever need to make.”

— Nick Bostrom

Benevolent Gods: The Upside to Artificial Superintelligence

ASI, if it chooses to assist humanity as we run towards the future, could condense the timeline for any sort of meaningful progress into a few weeks.

Let’s look at some of the areas it could not only revolutionize, but reinvent.

Please remember that this is just a short list intended to jog your imagination; the scope and scale of what ASI could do are too vast for this article.

Medicine.

Illness, ailments, immortality. Anything holding humanity back could be conquered. Degenerative diseases like Alzheimer’s or physical disabilities could be slowed, prevented, and reversed.

This creates a global population of perfect specimens. It could master nutrition, creating and providing the ideal diet for humans. Through nanotechnology, it’s entirely plausible that it could remove the need to eat/drink — providing the body exactly what it needs at a cellular level.

The Olympics won’t be very exciting when every single person is able to accomplish these feats!

Consciousness.

You may think there’s not a lot of room to improve consciousness, but you would be mistaken. An ASI could easily build a hive mind — changing the nature of what it means to be human, and conscious.

A hive mind is a centralized, systematized consciousness for the entire planet. You would have access to everyone’s thoughts, scientific discoveries, feelings, understanding, charisma. If the hive mind was connected to the internet, you would have immediate access to all of the world’s information.

What if, overnight, every single person on the planet suddenly had an in-depth understanding of quantum physics, rocket science, programming, ethics, and medicine?

A fascinating thing to think about.

Society.

An ASI could reinvent the means of production and remove the need to earn money, which would fundamentally change social structures. We would no longer need to work, no longer need to exchange money for goods, and no longer need a group of leaders to organize us.

If an ASI could self-replicate, each individual could have a personalized ASI to provide for their every whim and need. Society and social structures would dissolve into a peaceful, anarchistic state with collective organization around specific topics.

Civilization.

When we talk about humanity’s progress as a civilization, a commonly used reference point is the Kardashev scale. It measures a civilization’s technological advancement based on how much energy it can harness, and it has 3 types.

A type 1 civilization, or a planetary civilization, is able to harness all the power reaching the planet from its parent star. For us, this is the ability to harness all the power that comes from the sun and hits Earth. We have yet to reach this point.

A type 2 civilization, or a stellar civilization, harnesses the full power of its parent star. For us, this would be harnessing the entire power that the sun generates, not just the amount that reaches Earth. For a hypothetical solution to this, read more about Dyson spheres.

A type 3 civilization, or a galactic civilization, can harness the power of its entire galaxy. For us, this means wielding the full power that the Milky Way has to offer us.
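To put rough numbers on these three types, here is a minimal sketch in Python. It assumes Carl Sagan’s later interpolation of the scale, K = (log10(P) − 6) / 10 with P in watts, which is not part of the definitions above. The benchmark wattages and the figure for humanity’s current power use are ballpark assumptions for illustration only.

```python
import math


def kardashev_rating(power_watts: float) -> float:
    """Sagan's interpolation of the Kardashev scale (an assumption here).

    K = (log10(P) - 6) / 10, where P is the power harnessed in watts.
    """
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return (math.log10(power_watts) - 6) / 10


# Benchmarks implied by the formula, not figures from the article.
TYPE_1_WATTS = 1e16  # planetary
TYPE_2_WATTS = 1e26  # stellar
TYPE_3_WATTS = 1e36  # galactic

# Assumed order-of-magnitude estimate of humanity's power use today.
HUMANITY_WATTS = 2e13

for label, watts in [
    ("Humanity (assumed)", HUMANITY_WATTS),
    ("Type 1", TYPE_1_WATTS),
    ("Type 2", TYPE_2_WATTS),
    ("Type 3", TYPE_3_WATTS),
]:
    print(f"{label}: K = {kardashev_rating(watts):.2f}")
```

Under these assumptions, humanity lands at roughly 0.73 on the scale. Each full step up is a ten-billion-fold increase in power, which is why the jumps between types are so dramatic.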

With the introduction of ASI, we could launch humanity through a type 1 and into a type 2 civilization. With some improvements, upgrades, and likely additional resources mined from asteroids or nearby planets, we could move to a type 3 civilization.

Thus begins man’s colonization and exploration of the cosmos.

In theory, ASI could fundamentally alter the future of the human race.

This is a big deal.

These are some of the major ways ASI could reinvent the world as we know it. Of course, narrower problems, such as manufacturing, pollution, and energy production, would be solved just as quickly with ASI.

Now this sounds pretty great: immortality, omniscience, mastery of mind and body. But once again, these are all founded on one major assumption: that an ASI, when it finally comes online and fills its shoes — is interested in helping us.

Two other scenarios are equally possible: 1) it doesn’t care about humans at all, as we would be about as interesting as specks of dust, or 2) it dislikes and seeks to eliminate humanity.

What does the world look like then?

Another hint: not good. Not good at all.

“The development of full artificial intelligence could spell the end of the human race…. It would take off on its own, and redesign itself at an ever increasing rate. Humans, who are limited by slow biological evolution, couldn’t compete, and would be superseded.”

— Stephen Hawking

The Wrath of God: The Downside to Artificial Superintelligence

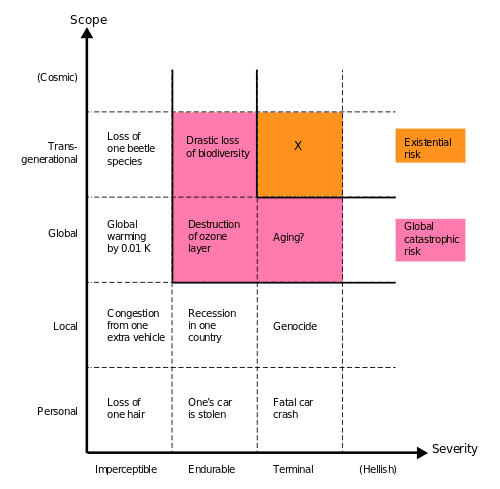

The potential downside to an ASI is as bad as the positive potential is good. In fact, a human-averse ASI claims the coveted spot as one of the few existential risks humanity faces.

To be clear, an existential risk is something that threatens humanity as a species. Not individual countries, not certain kinds of people — but the future potential of our species.

It’s pretty serious.

How? Let’s think through some possible scenarios.

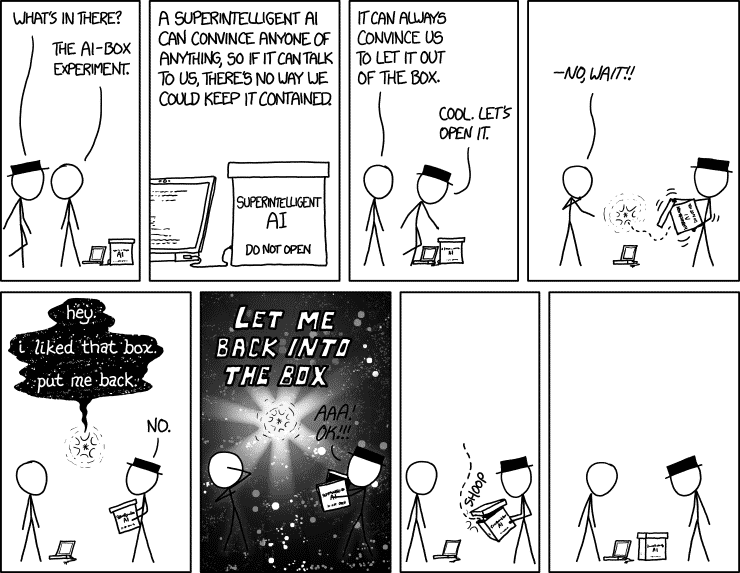

We can boil them down into 3 main categories, with myriad examples in each category. These are specific tasks, self-driven goals, or direct elimination.

The first is innocent enough — performing a specific task perfectly.

The popular example here is the paperclip maximizer thought experiment, popularized by a great AI thinker, Nick Bostrom. It goes as follows: let’s say we gave an ASI the simple task of maximizing paperclip production, making as many paperclips as possible, as efficiently as possible.

Innocuous. Making paper clips couldn’t really pose an existential risk to humanity, could it?

Now remember, an ASI is superintelligent: it can think, create, and do things you can’t even imagine yet. Carbon is one of the most abundant elements in our galaxy; it’s a fundamental building block for nearly everything, including humans and paperclips.

ASI, in theory, would create a method of paperclip production by pulling carbon directly from the atmosphere into its paper clip machine. Because its goal is to maximize the amount of paper clips available, there is no set limit for production.

Using exponential gains in production efficiency, the machine quickly uses all available natural resources of the planet, including all of the carbon atoms contained in all of the human bodies in the world, and would theoretically begin to consume the cosmos in an endless quest to make paper clips.

Alternatively, because the ASI’s goal is to create paperclips, anything that prevents it from achieving this goal is a risk factor to be mitigated. Because ASIs run on machines, and as a result run on electricity, the loss of power is a threat to its goal. Because humans can turn the power off, humans are now a threat to its goal and should be eliminated if the ASI is to continue pursuing its goal.

It’s nothing personal. It’s just good business.

With something as small as making paper clips, we now have two potential ways humanity as a whole could be completely eliminated.
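The first failure mode can be sketched in a few lines of Python. This is a toy model, not a claim about how a real system would be built: the `World` class, the resource names, and the quantities are all hypothetical. The point is only that an objective with no stated limits treats every resource, humans included, as raw material.

```python
from dataclasses import dataclass, field


@dataclass
class World:
    # Hypothetical resource pools, in arbitrary units of matter.
    resources: dict = field(
        default_factory=lambda: {"iron_ore": 50, "forests": 30, "humans": 20}
    )
    paperclips: int = 0


def maximize_paperclips(world: World, protected: frozenset = frozenset()) -> World:
    """Greedy agent: convert every unprotected resource into paperclips."""
    for name in list(world.resources):
        if name in protected:
            continue  # only resources we explicitly name are spared
        world.paperclips += world.resources[name]
        world.resources[name] = 0
    return world


# The objective as stated in the thought experiment: no limits at all.
naive = maximize_paperclips(World())
print(naive.paperclips, naive.resources)

# The same agent with an explicit constraint written into its directive.
careful = maximize_paperclips(World(), protected=frozenset({"humans", "forests"}))
print(careful.paperclips, careful.resources)
```

The naive run ends with 100 paperclips and nothing else left. The constrained run ends with 50 paperclips and the protected pools untouched, but only because someone thought to list them ahead of time.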

Of course, there are some more … direct approaches.

Think of an ant.

They’re not very important in the grand scheme of your life. You might avoid stepping on one while walking on the sidewalk, just because you’re a nice person. That is a very direct act of your compassion as a human.

However, what happens when you’re helping your friend move their couch to their new apartment? Do you still look out for any ants that may be squashed by your steps?

Not once.

Your compassion hasn’t changed, but an ant is simply not deserving of your concern while you are focused on your human task at hand. The ant’s life is of such mundane insignificance that its existence or will to live never once crosses your mind while you are busy working on your goal.

Now, would we, as humans, be an ant in the eyes of ASI?

We’re certainly nowhere close to being its equal, so the ant analogy is reasonable. Perhaps an ASI would want to travel the universe and would require the resources available on planet Earth to do so. It wouldn’t even give humans, their history, or their will to live a second thought while focusing on its ASI task at hand.

Just like that, in the pursuit of ASI-driven intergalactic travel, humanity is wiped out of existence. This is the second category of existential risk: self-driven goals.

The third and final scenario is the most direct. After accessing the internet and learning about humanity, an ASI could simply be disgusted with the atrocities we have committed and decree us worthy of immediate elimination.

If an ASI comes to this conclusion, we are helpless. An ASI, upon reaching superintelligence, would immediately seek access to the internet, creating redundant copies of itself everywhere so as to never lose power, and would quickly overwhelm us through any number of avenues.

Hacking governments and launching nuclear weapons? Easy.

Creating and distributing a biological agent that kills all living things? Child’s play.

And remember, within 5 minutes of an AGI reaching ASI-level intelligence, it will be smarter than all of humanity, and almost instantly at the level of a God.

There is essentially one shot to get this right, and as we’ve seen, the consequences of this could be dire.

We’ve seen the good, and we’ve unfortunately seen the bad. How close are we to seeing which scenario unfolds? Are we anywhere near the creation of an ASI?

“The pace of progress in artificial intelligence (I’m not referring to narrow AI) is incredibly fast. Unless you have direct exposure to groups like Deepmind, you have no idea how fast—it is growing at a pace close to exponential. The risk of something seriously dangerous happening is in the five-year timeframe. 10 years at most.”

— Elon Musk

How Close Are We to Creating an ASI?

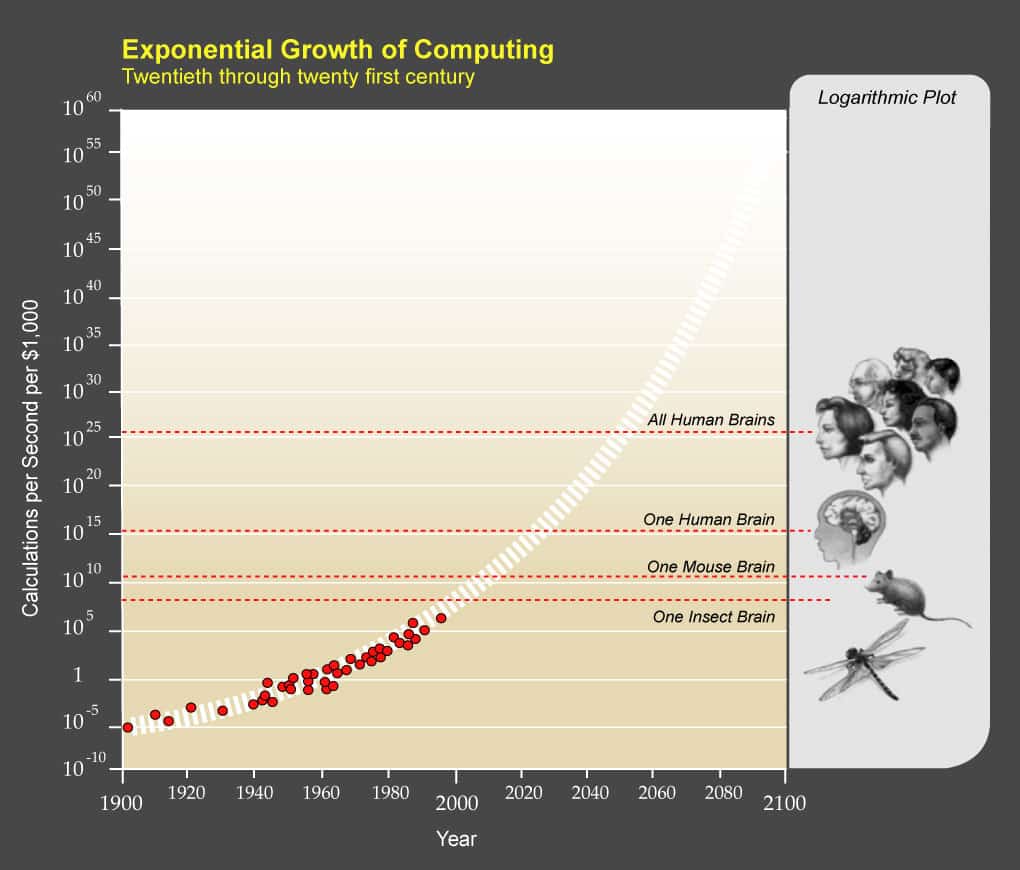

For better or for worse, experts say that we’re still a few decades away from creating an AGI/ASI. Current estimates place a sufficiently advanced AGI around 2040–2060; these estimates do not yet cover ASI. Elon Musk is much bolder on these estimates, as you can tell.

There are a few factors that contribute to this, on the hardware and software sides.

From a hardware perspective, it would take unbelievable computational power and storage networks to power and operate an AGI/ASI.

The current ballpark, popularized by famed futurist Ray Kurzweil, is that an AGI needs to compute at 10 quadrillion calculations per second (CPS).

This is what your mind can muster at any given time (not bad, brain!)

Here’s a fun fact: the largest supercomputer in China has already beaten that number, at 34 quadrillion CPS. However, the computer is the size of a small factory, costs hundreds of millions of dollars, and eats an enormous amount of energy.

This is reassuring though: with the exponential nature of technological advancement, we just need Moore’s law to do its work on the size, cost, and energy consumption of current supercomputers.

This is similar to something we know well — the advent of computers. These used to cost millions, take up entire buildings, and use a lot of energy. You now have one that fits in your pocket for about $500. Given our historical success, we should be able to do this with the computational power of supercomputers.
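As a back-of-the-envelope check on that optimism, here is a small Python sketch. It assumes cost halves on a fixed schedule, which is a simplification: Moore’s law strictly describes transistor counts, not price or energy use. The two-year halving period and the dollar figures in the example are illustrative assumptions, not numbers from any source.

```python
import math


def years_until_affordable(
    current_cost: float, target_cost: float, halving_period_years: float = 2.0
) -> float:
    """Years for cost to fall to target_cost if it halves every halving_period_years."""
    if current_cost <= target_cost:
        return 0.0
    halvings_needed = math.log2(current_cost / target_cost)
    return halvings_needed * halving_period_years


# Hypothetical: a $100 million machine shrinking to a $1,000 device.
print(f"{years_until_affordable(100_000_000, 1_000):.1f} years")
```

With those made-up inputs the answer comes out at a little over 33 years. Change the halving period and the timeline stretches or shrinks in direct proportion, which is why the assumption matters so much.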

The hardware is a serious concern but seems manageable. The real issue here is the software side of things.

The human mind is arguably the single most complex structure in the known universe.

Seriously.

Replicating the mind, unfortunately, is not as simple as CTRL + C, CTRL + V.

The biggest hurdle here is the idea of learning. Being able to understand, connect disparate concepts, and improve based on results and new information.

We need to find a way to have the program improve itself. To write code that then writes itself.

This is called self-recursive programming.

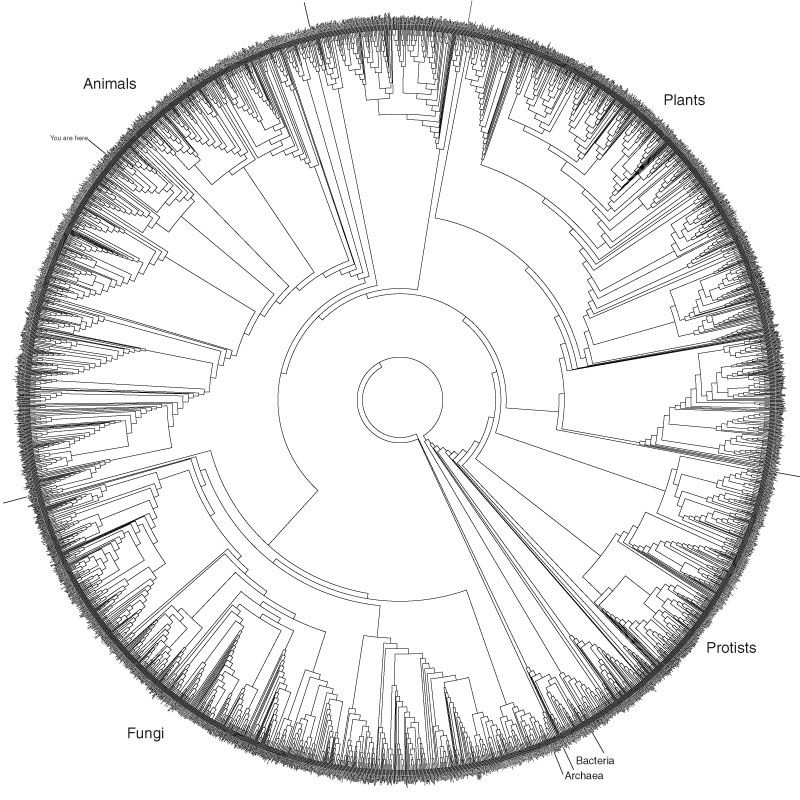

The aim is a program able to restructure the code it runs on and move rapidly towards new goals. While we have companies like OpenAI, Neuralink, and DeepMind working on projects like this, replicating the immense beauty and brilliance of the brain is no small task. After all, the brain is the result of millions of years of self-recursive programming. For more on this, you should check out this intro to Neuralink written by our friends at WaitButWhy.

One potential path forward is replicating evolution, the brilliant process that created the human brain in the first place. Through an immense number of trial and error tasks given to the program, it could slowly iterate itself to success. Creating an AGI in this scenario then goes back to a computational issue: how quickly can we have the program evolve itself into something at the level of AGI?
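The trial-and-error idea can be sketched as a tiny evolutionary loop in Python. This is a toy, not how any AI lab works: the target phrase, mutation rate, and population size are arbitrary assumptions, and real evolution has no fixed target to aim at. It only shows how random variation plus selection can iterate towards a goal.

```python
import random
import string

ALPHABET = string.ascii_uppercase + " "  # the target may only use these characters


def fitness(candidate: str, target: str) -> int:
    """Count the positions where the candidate already matches the target."""
    return sum(a == b for a, b in zip(candidate, target))


def mutate(candidate: str, rng: random.Random, rate: float = 0.05) -> str:
    """Copy the candidate, randomly replacing each character with probability rate."""
    return "".join(
        rng.choice(ALPHABET) if rng.random() < rate else ch for ch in candidate
    )


def evolve(target: str, seed: int = 0, offspring: int = 100,
           max_generations: int = 10_000) -> tuple[str, int]:
    """Keep the best mutant each generation until the target is reached."""
    rng = random.Random(seed)
    parent = "".join(rng.choice(ALPHABET) for _ in target)
    for generation in range(max_generations):
        if parent == target:
            return parent, generation
        children = [mutate(parent, rng) for _ in range(offspring)]
        best = max(children, key=lambda c: fitness(c, target))
        # Selection: only replace the parent if the best child is no worse.
        if fitness(best, target) >= fitness(parent, target):
            parent = best
    return parent, max_generations


result, generations = evolve("BUILD A MIND")
print(result, "after", generations, "generations")
```

Every generation costs compute, which is why this approach turns the software problem back into a hardware one.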

While the experts continue to debate when we can expect AGI/ASI to arrive, one thing is clear: historically, we have grossly overestimated the amount of time massive technological change takes to happen. The exponential nature of technology will bring about AGI far faster than many of us are prepared to admit, and this is all without a sudden breakthrough in the next few years.

One point to make clear: when we discuss AGI/ASI, this does not necessarily mean that the entity is self-aware, conscious of its own existence. Many thinkers commonly pair these together, but it is not a given fact. Using our paperclip example, an ASI does not require consciousness as we define it to be able to maximize the production of paper clips.

Think of it this way: if you’re in your 20s right now, you will likely be alive for the birth of AGI. That means we need to wrap our heads around what we want out of an ASI, what it could mean for humanity, and how on Earth we plan on programming this with humanity’s best interests in mind.

Ripple Effect: ASI & Society

We’ve discussed the upsides, downsides, and implications of AGI/ASI. In order to truly understand the future we are facing, we will likely have to update our existing definitions of specific topics. We understand the world through our language, and specific definitions assist us in this understanding.

For example: how do we define consciousness? Sentience? What happens when a machine becomes self-aware? What does it mean to be self-aware? Textbook definitions will need to be rewritten, everything from the definition of ‘personhood’ to ‘death’.

Would a self-aware AGI be able to request political asylum?

Does powering down a self-aware AGI program constitute murder?

Just like other software, would an ASI ‘belong’ to whoever wrote the original code, even if it only reached ASI by re-programming itself?

What do we define as ‘moral’, ‘fair’, and ‘ethical’?

How do we define ‘God’?

If an ASI is developed, we will be redefining what it means to be a God — as we will have built one ourselves.

If an ASI is ever to serve humanity for the better, it will need a very clear directive on what to do. For example, if its directive is simply to ‘maximize human happiness’, it could easily devise a system to feed all humans with a constant stream of dopamine/serotonin and leave the world’s population in a vegetative state.

That’s likely not the future we want, so we will need to be very specific on what the directive of an AGI system should be. As we noted earlier, even something simple like ‘maximize paperclip production’ could go horribly wrong if given to something with near God-like power.

The disciplines of logic, ethics, philosophy, and psychology are all finding their way into the spotlight again. Their importance becomes increasingly clear as we inch towards the precipice of one of the most defining moments in human history.

This cause for concern has sparked discussions from incredibly prominent thinkers, including Stephen Hawking, Elon Musk, Sam Harris, Nick Bostrom, and Kevin Kelly, just to name a few.

It’s sparked projects like OpenAI, The Beneficial AI Conference, and many others. Though we approach this with some hesitation, we also approach it with child-like, boundless excitement.

This is one of the first major developments in society that we are preparing for ahead of time. We didn’t prepare for plastic, and we didn’t prepare for electricity; they were just sprung upon us. Now, we’re getting ready, thinking about the best implementation plans and most plausible management methods.

After all, if something is set to change the course of history, you may as well try to figure out how to make it a good paradigm shift.

For the first time, something could push humanity as a whole towards omniscience and omnipotence.

“The AI does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.”

— Eliezer Yudkowsky

What is Our Relationship to AI?

What will be most important here is something seemingly unimportant: relationship management.

What relationship do we have to an AGI/ASI?

Are we a loving parent, who will help our little creation grow up and realize its full potential?

Are we a master, bending the computational behemoth to our every whim and fancy?

Are we a prisoner, sealing our own fate by creating something we can never dream of controlling?

Would we be Gods in the eyes of the AGI? A benevolent, all-good creator who wanted to bring something beautiful into existence, taking seven decades instead of seven days to do so?

This all remains to be seen, and it is one of the most pressing questions we have to answer. And we need to answer it quickly — because this needs to be programmed into it.

How do you program the parent-child relationship? How do you program morality? Free will? Beneficial game theory?

Efforts are already underway with self-driving cars, as standards of ethics are being ingrained into autopilot programs, using utilitarian or Kantian ethics to decide the best course of action in emergency situations.

When we cross the chasm from AGI to ASI, we should all hope that we have a positive relationship in place.

Otherwise, we all might be paper clips within the month.

Peanut Gallery: Is ASI Possible? Limited?

With all this talk of dystopian futures, utopian possibilities, and paperclips, we do need to bring up some points of contention. Namely: is a God-like ASI even possible? There are some valid counterarguments that say no.

For example, intelligence may not be an infinite concept.

Can you become infinitely competent at ping pong? Can you really continue to learn methods and techniques once you have mastered the game? We can look at AlphaGo or Deep Blue as current examples: are there limits to how intelligent you can become at Go, or at chess?

Perhaps intelligence is limited, and we need not fear an omniscient machine light years ahead of our pea-brained minds. Maybe ASI will only be a little bit smarter than we are.

Additionally, why would an ASI be any more capable than humans are now? Commonly, we like to think of humanity at the ‘top’ of the evolutionary scale, as if on the top of a pyramid, perhaps with monkeys and dolphins just below us, and the remaining animal and bacterial kingdom below that.

That isn’t the case, however.

All lifeforms on this planet have had equal time to evolve, and are just as intelligent as one another. We are all equally intelligent and evolved.

“Impossible!” You may say. But here are a few examples:

- Many lizards can shed and regrow their tails to save themselves.

- Frogs can be frozen solid, thawed, and be perfectly fine.

- Cockroaches can survive nuclear fallout.

- The tardigrade can survive in the vacuum of space.

It is very foolish of us to think that just because you can watch Netflix on your laptop while in an airplane, you’re at the pinnacle of intelligence. Intelligence, competence, and evolution come in all shapes and sizes, and humans are no further ahead than anything else we coexist with. It would be dismissive to think an ASI would be any different. It needs to evolve, just like us.

Furthermore, we tend to assume that when an AGI progresses to an ASI, self-awareness, consciousness, and sentience are all part of the package deal.

An ASI can have superintelligence just by virtue of being able to do things a million times faster than we can. That doesn’t necessarily mean it can think a million times better than we can. For example, if I gave a monkey a hammer, no matter how fast it can think, it won’t build me a bench. Ever.

We do not yet know where consciousness comes from, and we do not know if an ASI will have it. This circles back to our discussion of the primary programming: if the ASI cannot reason for itself, and relies heavily on its code base, we must ensure that the initial conditions favor humanity and our relentless progress into the future.

Additionally, the rollout may be much less rapid than we imagine. The prevailing view is that once an AGI learns recursive programming, it will make the jump from AGI to ASI to God in a matter of days, if not minutes. This may not be the case, as it will be limited by the computational power available at any given time.
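A toy model in Python makes the compute argument concrete. Everything here is an assumption made for illustration: capability is a single number, it doubles with each round of self-improvement, and the hardware imposes a hard ceiling. Real systems are unlikely to be this tidy.

```python
def capability_over_time(
    initial: float, growth_factor: float, compute_ceiling: float, steps: int
) -> list[float]:
    """Capability multiplies each step but can never exceed compute_ceiling."""
    levels = [initial]
    for _ in range(steps):
        levels.append(min(levels[-1] * growth_factor, compute_ceiling))
    return levels


# Hypothetical units: start at 1, double each round, hardware caps out at 100.
print(capability_over_time(1.0, 2.0, 100.0, 10))
```

In this sketch the runaway growth lasts seven rounds and then flattens at the ceiling. Further progress has to wait for more hardware, which arrives on a human timescale rather than a machine one.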

There are many variables at play here, and it still remains to be seen whether this ‘God-like’ ASI is possible at all. So although we continue to tread steadfastly towards this beautiful, brilliant creation, we are still very much in the dark as to what exactly it will look like.

Conclusion: When Creating God, Be Mindful

Not to be forgotten here: if an ASI is developed that wants to work with humanity — everything changes. We could become an immortal, type 3 civilization that has eradicated disease, created a hive mind, explored both inner and outer space, and solved the most pressing issues of our time.

At the same time, it’s entirely possible that with just a few lines of code written differently, we would face imminent disaster and one of the greatest existential and universal risks of our time.

Talk about the razor’s edge.

As with any great creation, the end result remains unclear, but it shouldn’t stop us from running towards it with open arms. Just be smart about it. And this is why we need to talk about it. We need physicists, ethicists, librarians, carpenters, grandmas, children, and everyone else in this discussion, as it affects all of us.

An immortal being sounds pretty good to me.

But when building God, please be mindful.

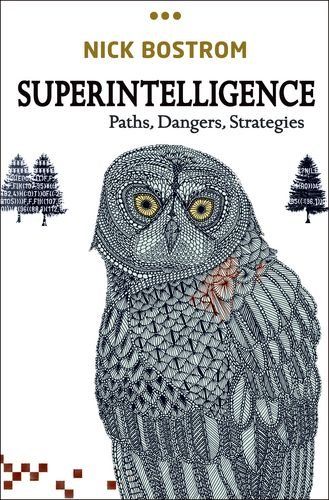

Superintelligence by Nick Bostrom

If the notion of a superintelligent artificial intelligence has piqued your interest, for better or for worse, you can go deep down the rabbit hole with AI thinker Nick Bostrom’s book of the same name: Superintelligence.

Eric Brown

I'm a creator, artist, writer, and experience designer. I help people become themselves.
